AI RP: What is it, and how is it done?

Written 18/04/26

As AI and LLM technology continues to advance and become more commonplace in people's lives, one aspect of LLMs seems to have gone ignored by both people and corporations: roleplaying. The niche nature of the hobby has led it to become rather insular, and most documentation is written for those who have at least been doing AI RP for some time.

This page aims to both educate on how AI roleplaying works and what it's like, and also to serve as somewhat of a time capsule for this small hobby.

Where's the buzz?

When preparing to write all this, I did some digging around. I had the feeling that the general populace weren't familiar with AI RP, let alone with the "true" kind of RP. So I went looking for articles and blogposts, and yeah. There really isn't much talk about it, and even to this day, the most discussed AI RP-related thing is CAI. Which is sad, since most people who engage in "true" chatbot RP left that place behind three years ago.

Comments

Hello! You'll see these comment blocks pop up from time to time. I use them when I have something to say that might not be completely relevant, when I'm speculating on something, or when I'm going into a topic for people who already have experience with the hobby. Basically, non-required reading!

Definitions

Since words like "AI", "chatbot" and "LLM" tend to be used interchangeably, I will define the language that is used on this page. Some of these will be expanded upon deeper into the text.

- AI - Artificial Intelligence. A general term, used to describe anything a computer may do to simulate intelligence.

- LLM - Large Language Model. Text generation models such as those of the Claude or ChatGPT families. Just "Model" also refers to this.

- Chatbot - A general term that's used for both LLMs and character cards.

- Card - Character cards, or just "cards", are images with metadata embedded onto them related to LLM usage.

- Frontend - In this context, a program or website that serves as an interface for LLM and card usage.

- Token - A short string of text characters, at most a single word. LLM output is done in tokens; it writes one out, and then based on RNG and the settings of the prompt, it decides the next one and so on. Token calculations are not universal; they depend on what LLM is used.
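To make the token definition a bit more concrete, here is a minimal sketch of how "RNG and settings" can decide the next token. The vocabulary, the scores and the `sample_next_token` helper are all made up for illustration; a real LLM scores tens of thousands of possible tokens with a neural network, and temperature is only one of several sampling settings.

```python
import math
import random

def sample_next_token(scores, temperature=1.0, rng=random):
    """Pick one token from a {token: score} dict using temperature sampling."""
    tokens = list(scores)
    # Dividing by the temperature sharpens (<1) or flattens (>1) the distribution.
    scaled = [scores[t] / temperature for t in tokens]
    # Softmax weights; subtracting the max keeps exp() numerically stable.
    top = max(scaled)
    weights = [math.exp(s - top) for s in scaled]
    return rng.choices(tokens, weights=weights, k=1)[0]

# Hypothetical scores for the token that follows "The cat sat on the"
scores = {" mat": 5.0, " sofa": 3.5, " moon": 0.5}
rng = random.Random(0)  # seeded so a run can be repeated
print(sample_next_token(scores, temperature=0.7, rng=rng))
```

A low temperature makes the top-scoring token win almost every time, while a high one lets unlikely tokens through more often. This is the "how random you want the token selection to be" knob that presets expose.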

What is AI RP?

If you are at all familiar with the concept of AI RP, you have probably seen services like Character.AI. Simple, text-message-looking chat services where you can have a back-and-forth with an AI character. Or alternatively, you've used LLMs such as OpenAI's ChatGPT, Google's Gemini or Anthropic's Claude in your browser or as an app.

These are very simple applications of AI RP, and only scratch the surface of what is possible. Open-source frontends have allowed for much more freedom than that. Since you have full control, you could do things such as playing multiple characters, playing a narrator role, and even manipulating/editing chat history to your liking.

If there is one word I would use to describe AI RP, it would be freedom. The freedom to play out any scenario you might have in your head. LLMs are extremely eager to please the user, and the text-based format means the only restriction is your own imagination. Logic is unnecessary, realism a mere suggestion.

Lacking logic is good! ...sometimes.

Since it is all just text, outputs are not bound to the rules of reality. Which means, sure, you can play a character that eats a ball of lead as big as your head and be completely fine, but it can also affect outputs negatively. The two primary issues with LLMs in this aspect are spatial awareness and characters knowing things they realistically shouldn't simply because the information was included in the prompt.

The next sections will feature a short history of chatbots and AI RP, and an explanation of how the hobby works. If you want chatbots to stay as some mystical magical thing to you, you can skip to here.

A short history

The idea of talking to a computer is nothing new, and experiments in this field have been going on ever since computers were able to parse text. What became known as Cleverbot (released 2008) started out in the 80's and was iterated upon during the subsequent decades. It's similar to the LLMs of today, though much less advanced. You can send a short message to it, and get something that may make sense in return.

Façade is a game that was released in 2005, and serves as a simulation of a drama between a couple, with the player taking the role of a friend that's come to visit. To interact with the characters, the player types text into a bar, with the characters reacting to the dialogue with prerecorded lines. The player would have to find the right things to say in order to steer the conflicted couple's conversation, or to find ways to add fuel to the flame. Or to just spout horrible profanity and get kicked out.

Released in 2019, AI Dungeon featured a simple text adventure UI where a player could select a setting, then use typical text adventure verbs such as "do" or "say" to progress the story. What made this differ from the text adventure games of yesteryear was that AI Dungeon was all LLM-driven. Unlike the text adventures of old, you no longer had to be stuck with a predefined set of rooms, actions and responses to dialogue/actions. You could do whatever you wanted!

Streamers and YouTubers picked up AI Dungeon (such as OneyPlays, Vinesauce and Jerma985), and quite a lot of buzz was generated around it. But as it was limited by the technology of the time, only a small core audience remained after the hype died down. Nevertheless, it proved that interest was there for interactive LLM-driven experiences.

Things changed during the autumn of 2022, when Character.AI was released to the public. In the beginning it was completely free, and it allowed people to create AI characters and share them with others on its public repository. This was the seminal chatbot service, and both the card format (more about that below) and most frontends draw from its DNA. The service itself was pretty simple: each character had an icon and information about the character ("definitions") that would let the AI determine how it should respond for a character. You could then chat to that character one-on-one, or do group chats with multiple characters.

One thing that drew people's attention was that the LLM they used had actual knowledge about real and fictional characters. It would understand references to, and could itself make references to, many popular franchises. Characters weren't limited to "characters", either. You could have "characters" that were game masters or simulators!

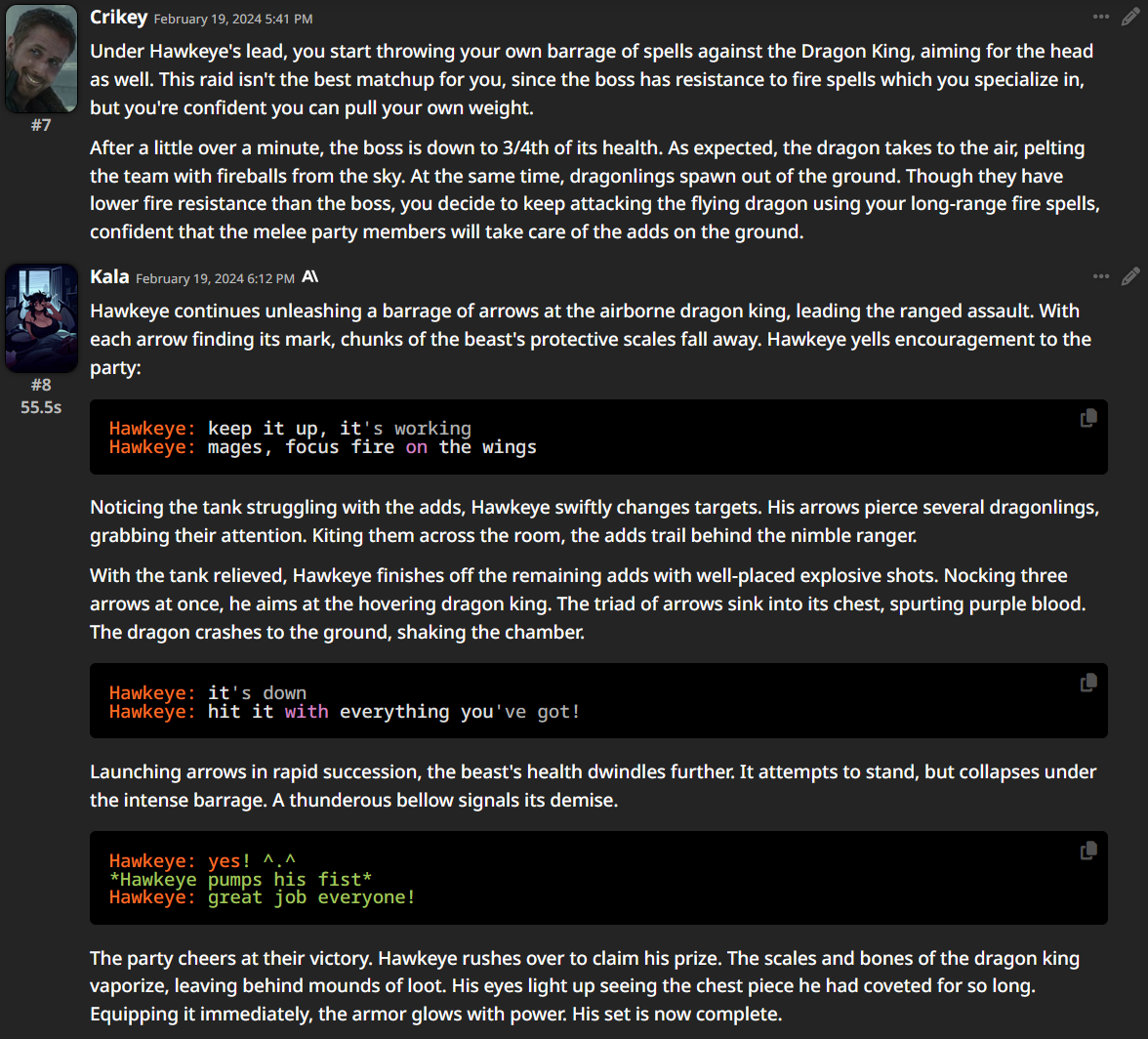

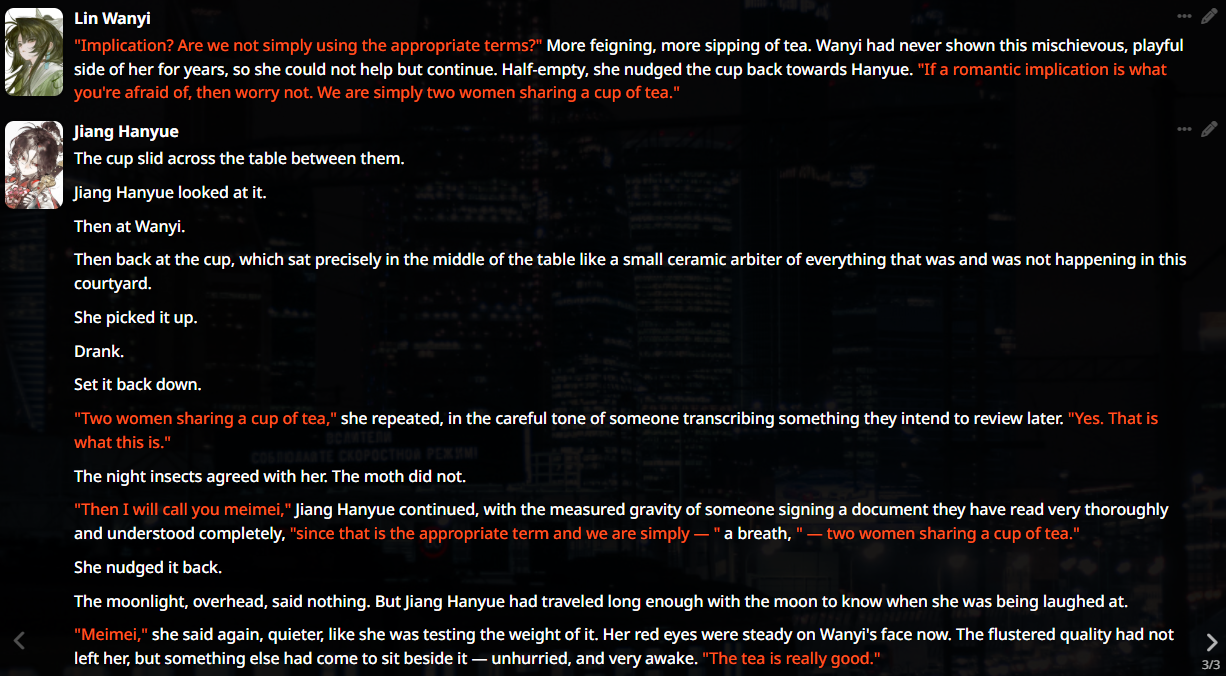

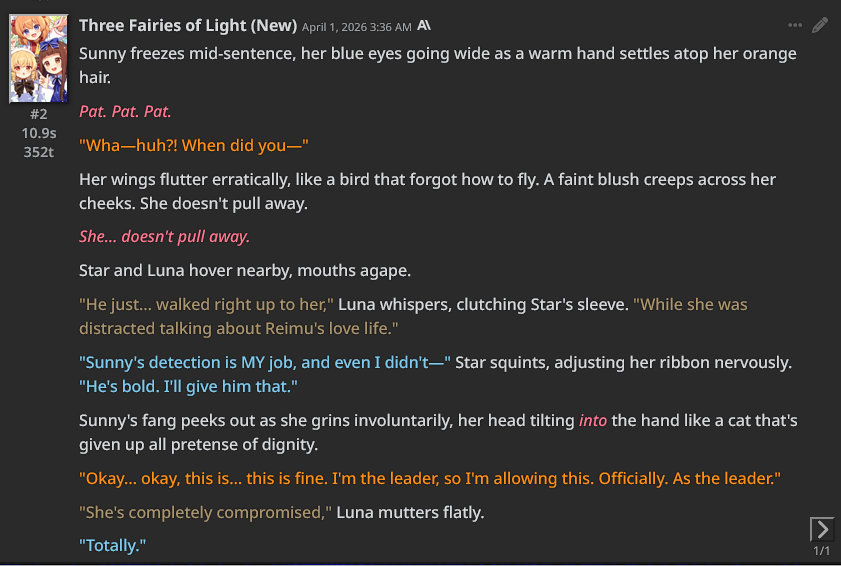

Here are some chatlogs of classical CAI.

Prompts

Now that the history lesson is over, it's time to begin explaining how chatbots and AI RP actually work. When you interact with an LLM, you are sending a prompt, a string of text that acts as a request for the AI to respond to. This can be anything from asking the ChatGPT web app what the capital of Mexico is, to sending the 100th message in an RP with a highly detailed character.

Something I see people often misunderstand is how "chatting" with an AI works. Simply put, when sending a message to an LLM, you are sending the entire prompt with all the history, and it generates a reply from that. Unless you or your software is injecting other information into the prompt, it has no "memory" of other prompts. (See: ChatGPT's "memory" feature)
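Here is a small sketch of what that "no memory" point looks like in practice. `fake_llm` is a made-up stand-in for a real model call, and the role/content message shape is just a common convention I'm assuming for illustration; the point is only that every call receives the full history again.

```python
def fake_llm(prompt):
    """Stand-in for a real model call: it only ever sees `prompt`."""
    return f"(reply based on {len(prompt)} messages)"

class Chat:
    def __init__(self, system_prompt):
        self.history = [{"role": "system", "content": system_prompt}]

    def send(self, user_text):
        self.history.append({"role": "user", "content": user_text})
        # The whole history is sent every time; the model keeps no state itself.
        reply = fake_llm(list(self.history))
        self.history.append({"role": "assistant", "content": reply})
        return reply

chat = Chat("You are Aria, a cheerful innkeeper.")
print(chat.send("Hello!"))
print(chat.send("What did I just say?"))
```

The second call only "remembers" the first message because the frontend put it back into the prompt. Delete or edit an entry in `history`, and as far as the model is concerned, that is what happened.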

Presets and how LLMs write

Presets are how we manage what's included in a prompt, and how it is ordered. They are made up of many smaller prompts that can be toggled on or off at will. Most presets make use of the information from the character card you're using, the chat history, any active lorebooks and more. They also contain settings for the LLM you're prompting, such as max output size or how random you want the token selection to be.

Jailbreaks

In the beginning, presets were simply called "jailbreaks", as they were usually just an addition to the chat prompt that was meant to override AI safety protocols. One jailbreak prompt and one "main" prompt would be sent to the AI along with the chat history. This was phased out as frontends began to allow for modular preset construction, where you could toggle sub-prompts on and off.

This is all for the purpose of instructing the AI on how to write, and what is important for the outputs you want to receive. Some presets are very detailed, with dozens of prompts for what flavor of story or writing style you'd like the AI to write. As mentioned previously, most LLMs are built to acquiesce to the user. Some might read that as the AI always being sycophantic, but what it truly means is that the AI will attempt to adhere to your instructions as best as it can. Leading questions often lead to bad or "hallucinated" AI outputs, and it's something some AI companies are still struggling to curtail to this day. There can be a big difference in responses when asking HOW you can do something compared to asking if you CAN do something, especially on more complicated topics.

Why is this? Because unlike humans, LLMs can't rewrite something once it's written. You will see minor corrections appear sometimes, but often they will just stick to whatever they've written, requiring a user to intervene. LLMs write by predicting what token they will write next (despite what marketers try to tell you, they are not highly intelligent). Mistakes become very cumbersome with this structure. Take this as an example: if you were trained on all the literature in the world, with what word would you continue this sentence? "Elephants are made of strawberries" This is (as far as we know) a false statement, yet since LLMs don't plan out their sentences, it will be difficult for one to dig itself out of this hole. Take a moment and think, go word-by-word and don't plan out an entire sentence beforehand. Can you come up with something that saves it?

A solution to this problem?

Considering how current-day LLMs work, I believe this to be an inescapable problem. But there are ways to minimize the issue! People have made setups where once they get the AI response, it automatically gets sent back to the AI for review. This review process can be used to spot logical inconsistencies, remove commonly-repeated phrases and more!

However, this of course has the drawback of forcing you to prompt the same message twice. There are ways to cut down on the size of the second prompt, but you will still have to pay more than what a normal message would cost you.
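A rough sketch of such a review setup might look like this. The `respond_with_review` helper and the wording of the review instruction are my own inventions, not taken from any specific extension, and `fake_generate` stands in for whatever actually calls your model.

```python
def respond_with_review(generate, prompt):
    """Two-pass generation: draft a reply, then ask the model to revise it."""
    draft = generate(prompt)
    review_prompt = (
        "Revise the reply below. Fix logical inconsistencies and remove "
        "overused phrases. Return only the revised reply.\n\n"
        f"Context:\n{prompt}\n\nReply:\n{draft}"
    )
    # The second call is where the extra cost comes from.
    return generate(review_prompt)

calls = []

def fake_generate(prompt):
    """Stand-in model call that just records what it was sent."""
    calls.append(prompt)
    return f"output #{len(calls)}"

print(respond_with_review(fake_generate, "User: Hello!"))
print(len(calls))  # two model calls for one visible reply
```

Note that the review prompt here includes the full context again, which is the expensive version. Sending only the draft, or only the last few messages, is one way to cut the second prompt down.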

I mention all this because while presets can help in setting a style and establishing rules for the AI, they are not a one-shot solution. LLMs are very fickle, and depending on what you are trying to do, you may have to toggle a specific prompt in your preset on or off, or make manual adjustments. It is also important to mention that while LLMs may seem smart, they are not mind-readers. They only take in what information you gave them in your prompt, and if they're not picking up on what you want them to do, you will need to find a way to get that information across.
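To tie the preset discussion together, here is a minimal sketch of a preset as an ordered list of toggleable sub-prompts plus model settings. The class names, the `{description}`/`{history}` placeholders and the setting names are hypothetical and much simpler than what real frontends store.

```python
from dataclasses import dataclass, field

@dataclass
class SubPrompt:
    name: str
    text: str
    enabled: bool = True

@dataclass
class Preset:
    prompts: list  # ordered list of SubPrompt; order decides prompt layout
    settings: dict = field(default_factory=dict)  # e.g. temperature, max output

    def build(self, card, history):
        """Fill in the enabled sub-prompts, in order, as one prompt string."""
        values = {"description": card["description"], "history": "\n".join(history)}
        parts = [p.text.format(**values) for p in self.prompts if p.enabled]
        return "\n\n".join(parts)

preset = Preset(
    prompts=[
        SubPrompt("main", "Write the next reply in this roleplay."),
        SubPrompt("card", "Character: {description}"),
        SubPrompt("style", "Keep replies under three paragraphs.", enabled=False),
        SubPrompt("chat", "{history}"),
    ],
    settings={"temperature": 0.9, "max_tokens": 400},
)
card = {"description": "Aria, a cheerful innkeeper."}
print(preset.build(card, ["User: Hello!"]))
```

Flipping `enabled` on the "style" entry is the code equivalent of toggling a prompt in your preset: the card and the chat stay the same, but the instructions the model receives change.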

The card format

The card format was also something inherited from CAI. On CAI, the characters were stored as data on a web server. When people began to move away from CAI, they first began sharing characters across different frontends by saving the card data as a JSON file, using common keywords that had been used in CAI as sections of data. Eventually these became character cards, named as such because they are "cards" you can share and slot into a frontend of your choice: the metadata is stored on the image, which is then imported into your frontend.

Some frontends will allow you to import character data through a JSON file, but the most common way these days is through an image with metadata.
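For the curious, here is a sketch of what "an image with metadata" can mean at the byte level, using only Python's standard library. It stores the card JSON, base64-encoded, in a PNG `tEXt` chunk under the keyword `chara`. That keyword and encoding reflect my understanding of how SillyTavern-style cards are commonly stored, so treat them as assumptions; the card fields and helper names are purely illustrative.

```python
import base64
import json
import struct
import zlib

PNG_SIG = b"\x89PNG\r\n\x1a\n"

def chunk(kind, data):
    """Serialize one PNG chunk: length, type, data, CRC over type+data."""
    crc = zlib.crc32(kind + data)
    return struct.pack(">I", len(data)) + kind + data + struct.pack(">I", crc)

def iter_chunks(png):
    """Yield (type, data) for each chunk in a PNG byte string."""
    pos = len(PNG_SIG)
    while pos < len(png):
        (length,) = struct.unpack(">I", png[pos:pos + 4])
        yield png[pos + 4:pos + 8], png[pos + 8:pos + 8 + length]
        pos += 12 + length  # 4 length + 4 type + data + 4 CRC

def embed_card(png, card, keyword=b"chara"):
    """Insert a tEXt chunk with base64-encoded JSON right before IEND."""
    payload = keyword + b"\x00" + base64.b64encode(json.dumps(card).encode("utf-8"))
    # The IEND chunk is always the final 12 bytes of a valid PNG.
    return png[:-12] + chunk(b"tEXt", payload) + png[-12:]

def read_card(png, keyword=b"chara"):
    """Return the embedded card dict, or None if the image carries none."""
    for kind, data in iter_chunks(png):
        if kind == b"tEXt":
            key, _, value = data.partition(b"\x00")
            if key == keyword:
                return json.loads(base64.b64decode(value))
    return None

# A minimal 1x1 grayscale PNG to act as the card image.
ihdr = struct.pack(">IIBBBBB", 1, 1, 8, 0, 0, 0, 0)
blank = (PNG_SIG + chunk(b"IHDR", ihdr)
         + chunk(b"IDAT", zlib.compress(b"\x00\x00")) + chunk(b"IEND", b""))

card = {"name": "Aria", "description": "A cheerful innkeeper."}
print(read_card(embed_card(blank, card)))
```

Because the data lives in an ancillary chunk, image viewers simply ignore it, which is why a card still looks like an ordinary picture. It is also why image hosts that re-encode uploads tend to destroy cards.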

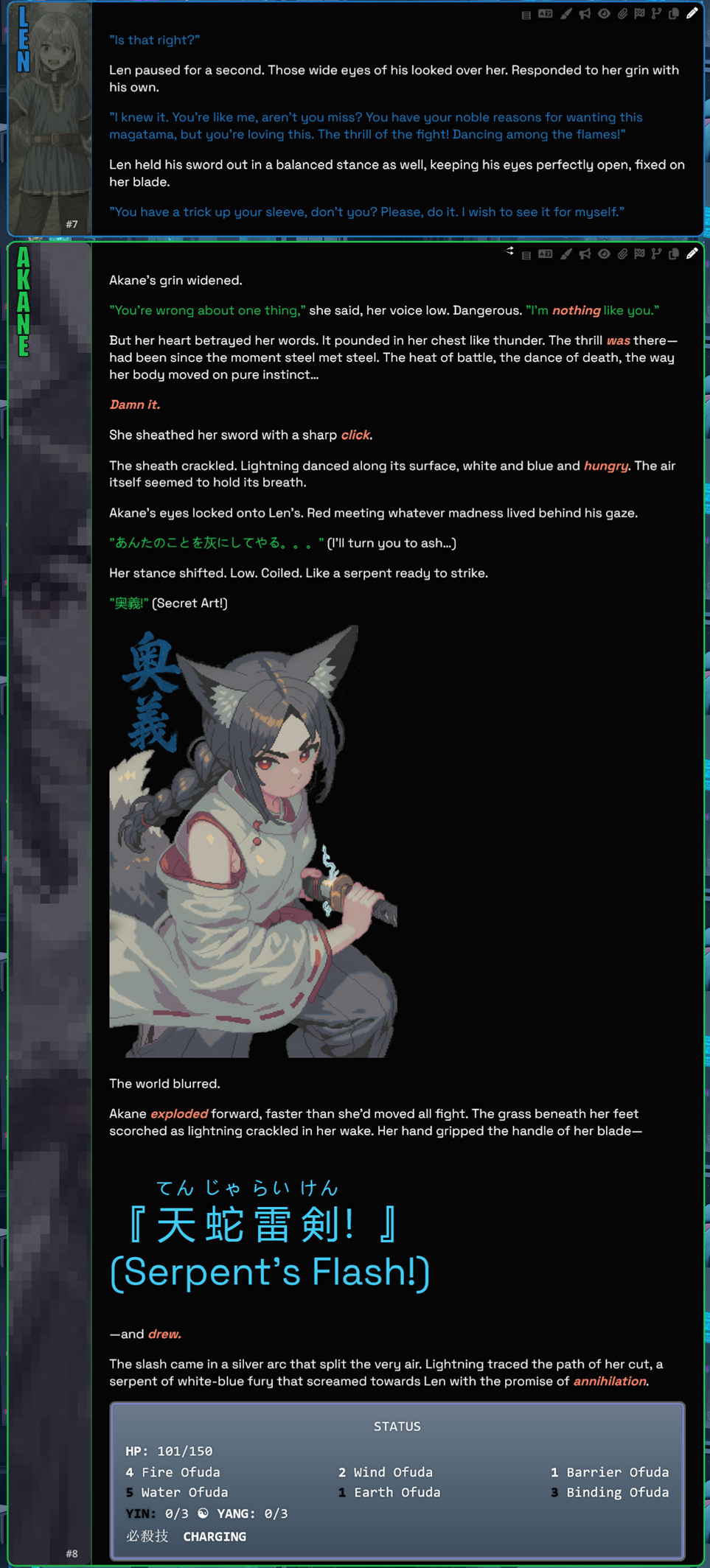

In SillyTavern, there are multiple input fields for character information. These fields of data are commonly known as definitions, or just "defs". You can also link a lorebook and regex scripts to the card. Regex scripts alter text; in this context they are mostly used either to reduce token usage (both in sheer number and, if you're doing a card full of mechanics, by removing useless data from the prompt) or for fancy CSS displays.

Each input field has its own use, though technically they are just strings of text sent to the preset. It is the preset that decides how and where they're going to be placed, and in what context. Some presets allow you to disable things such as example dialogues, or dress things up and explain to the AI what each section is.
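As for the regex scripts mentioned earlier in this section, here is a sketch of the token-saving use. It assumes a made-up convention where a mechanics-heavy card has the AI end every reply with a `<status>...</status>` block; only the newest block matters, so older ones are stripped before the history goes into the prompt. The tag name and the `trim_history` helper are hypothetical.

```python
import re

# Hypothetical convention: replies end with a <status>...</status> block.
STATUS_BLOCK = re.compile(r"<status>.*?</status>\s*", re.DOTALL)

def trim_history(messages, keep_last=1):
    """Strip status blocks from all but the newest `keep_last` messages."""
    cutoff = len(messages) - keep_last
    return [
        STATUS_BLOCK.sub("", m).strip() if i < cutoff else m
        for i, m in enumerate(messages)
    ]

history = [
    "You enter the cave.\n<status>HP: 10/10 | Gold: 3</status>",
    "A bat attacks!\n<status>HP: 7/10 | Gold: 3</status>",
]
for message in trim_history(history):
    print(message)
```

The same pattern-and-replace idea drives the "fancy CSS displays": instead of replacing the block with nothing, the script replaces it with HTML that the frontend renders.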

Lorebooks

Alternatively called worldbooks, lorebooks are collections of conditional information. Lorebooks consist of text entries, which are activated and sent with the prompt when certain conditions are met. Usually these conditions are that a certain string of text is found in the chat history. For example, you could have a set of supporting characters inside of a connected lorebook, saving tokens when those characters are not in the current chat.

If you have a story-focused card, you could use lorebooks for event triggers, or if the card is divided into chapters, you could have different data sent with the prompt depending on which chapter you're on. Another thing you can do with lorebooks is to send additional instructions to the AI. In a way, they can act as an additional preset.
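The keyword-trigger idea can be sketched in a few lines. The entry layout (`keys`, `content`) and the `scan_depth` parameter are names I'm assuming for illustration; real frontends offer many more conditions than a plain substring match.

```python
def active_entries(lorebook, history, scan_depth=4):
    """Return the text of entries whose keywords appear in recent messages."""
    recent = " ".join(history[-scan_depth:]).lower()
    return [
        entry["content"]
        for entry in lorebook
        if any(key.lower() in recent for key in entry["keys"])
    ]

lorebook = [
    {"keys": ["Mira", "blacksmith"],
     "content": "Mira is the town blacksmith. She distrusts mages."},
    {"keys": ["Old Tom"],
     "content": "Old Tom runs the ferry and gossips constantly."},
]
history = [
    "User: I walk into town.",
    "AI: Smoke rises from the blacksmith's forge.",
]
print(active_entries(lorebook, history))
```

Only Mira's entry is returned here, so Old Tom costs no tokens until someone mentions him. An entry holding instructions instead of lore works the same way, which is how a lorebook ends up acting like an extra preset.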

What do people do with chatbots?

As established, people roleplay with them! Some people write out stories where they play a character in the story, while others take on the role of a narrator. This is a hobby where it's very easy to tailor everything to your own preferences, and this is seen in the wide variety of stories people write and cards they create.

One person may prefer to go on adventures, another to roleplay cozy days indoors with their digital wife. The variety is as wide as any selection of media genres, and could even be deeper than that due to the lack of physical restrictions this medium puts on you. Your own creativity is the only limit!

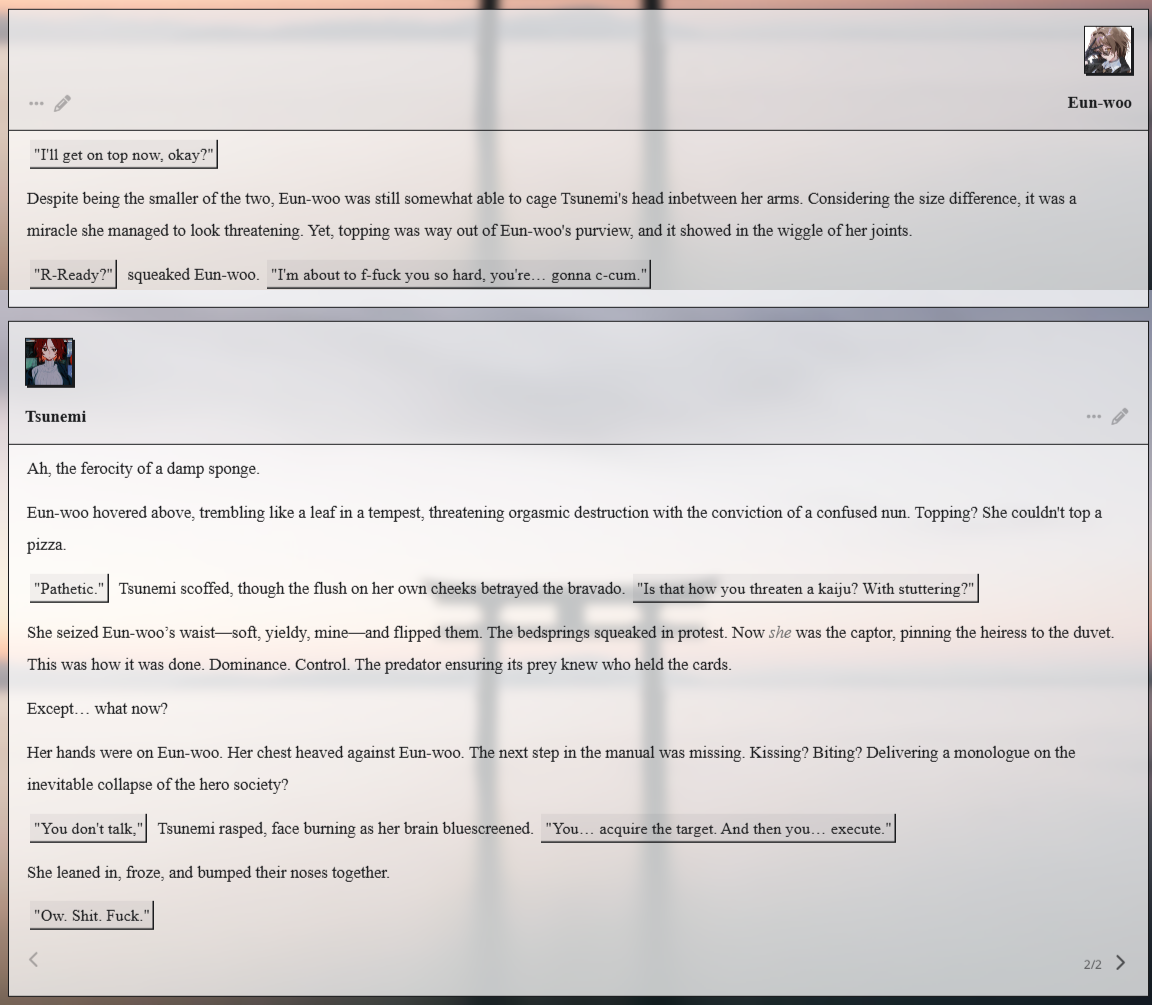

Something that I've neglected to mention so far is the sexual aspect of AI RP. Because as with any other kind of media, be it video, audio, games or literature: people will always find a way to turn it sexual. Like with the general appeal of AI RP, I believe the main appeal in sexual RPs is the freedom and control you have. Even porn games are highly limited in the freedoms they give you, but with chatbots? You could have your way with anything! Anywhere! In any manner you'd like!

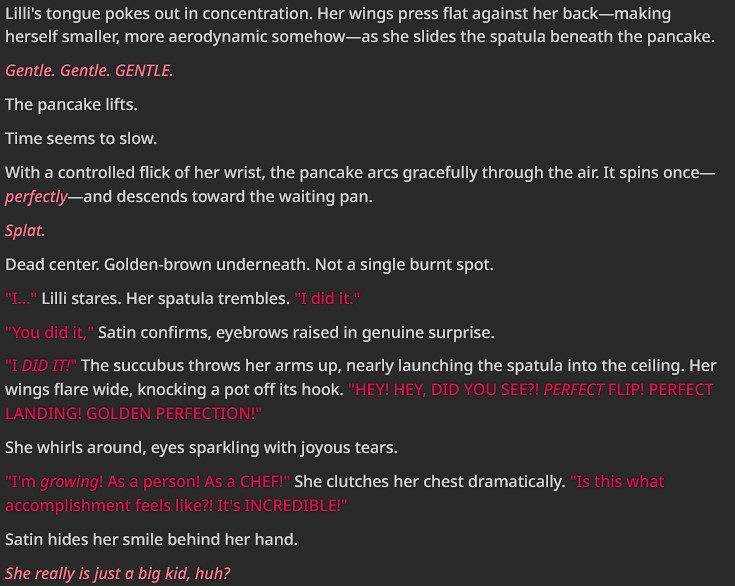

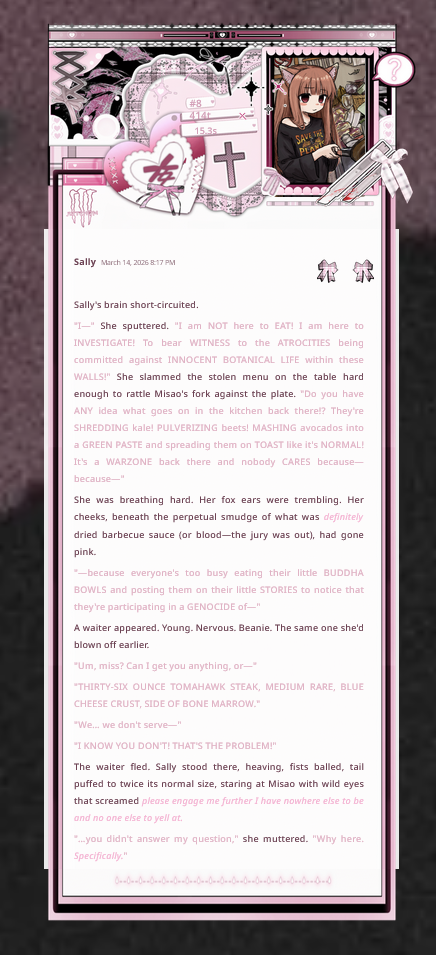

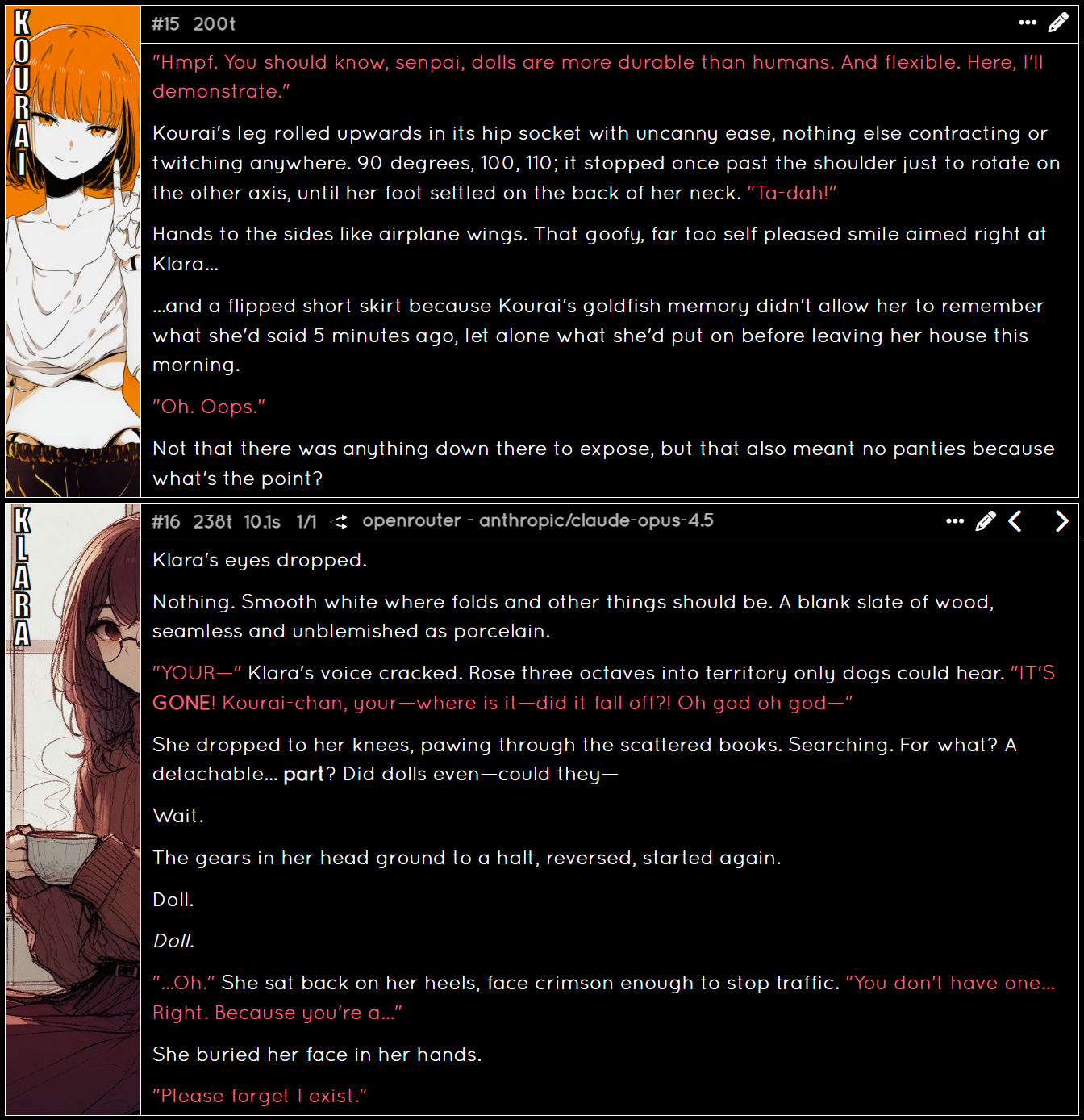

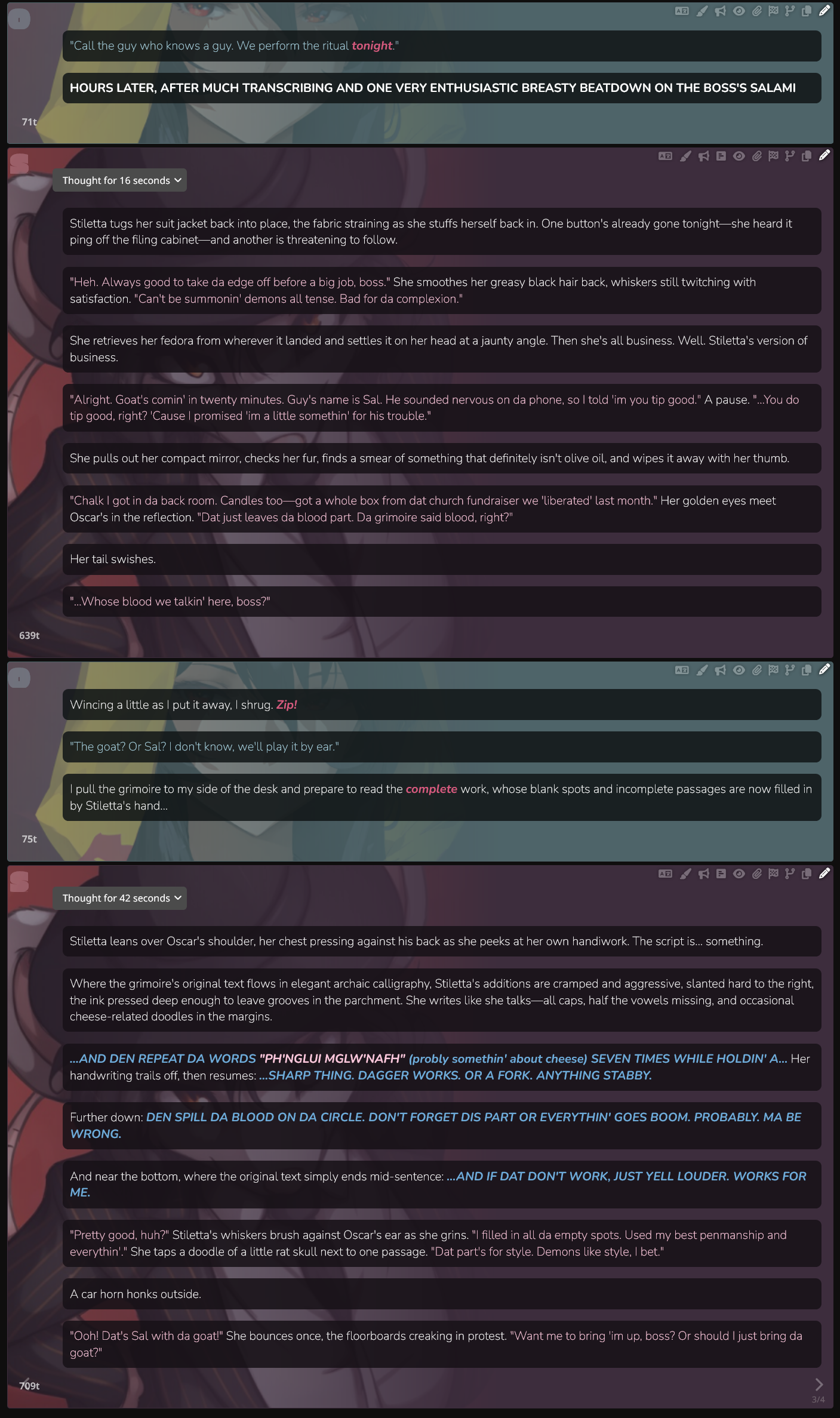

What I won't go into here are "normal" character cards; they are just too numerous, and you can find things that cater to just about every single taste out there. The cards that stand out, and that I want to showcase, are the more advanced "scenario" or "game" cards. These are meant to create an experience that isn't simply the player chatting to a single character. Here is a list of some:

- An RPG adventure

- A set storyline players are meant to explore

- Nation simulators

- Dating simulators

- A karaoke machine!

- Tower defense!

- The MonGirl Trifecta: Help Clinic, 4chan Simulator, AITA Simulator (Help Clinic and 4chan originated on CAI!)

- And so much more!

For example, one person (Archive) has adapted a staggering amount of TTRPGs into SillyTavern.

Aside from cards, there are also many extensions for SillyTavern that can provide a unique experience. One I'll feature is EmulatorJS, which lets you play emulated games in your browser while the AI provides commentary.

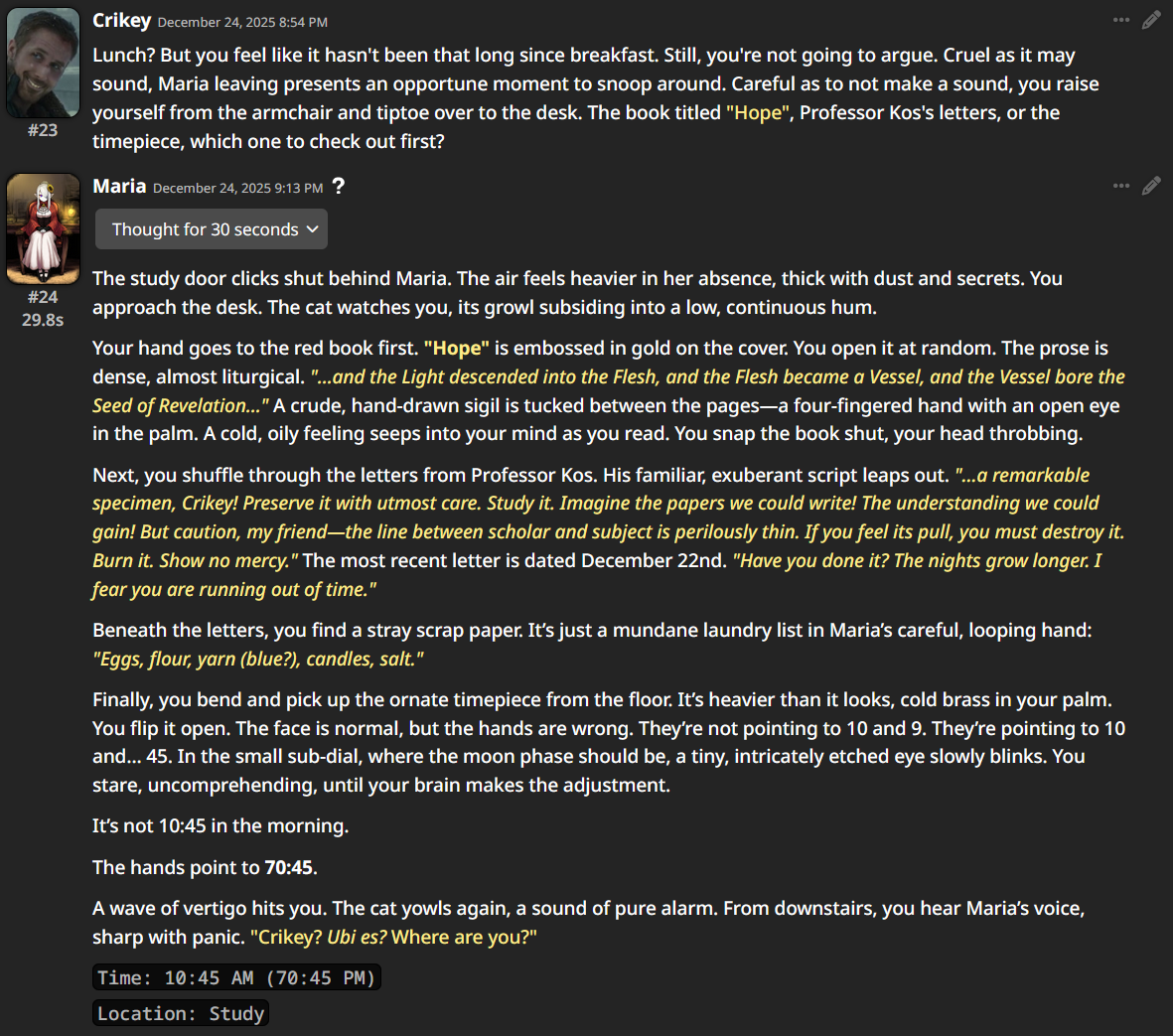

A "Visual Novel mode" has existed in SillyTavern for a long time, but many users have found it lacking. There have been other efforts to make VN-like experiences with AI. For SillyTavern use, an early example was Tomoyo (Archive). There is also Monikaanon, who created a VN interface and even allowed his AI to interface with the online world.

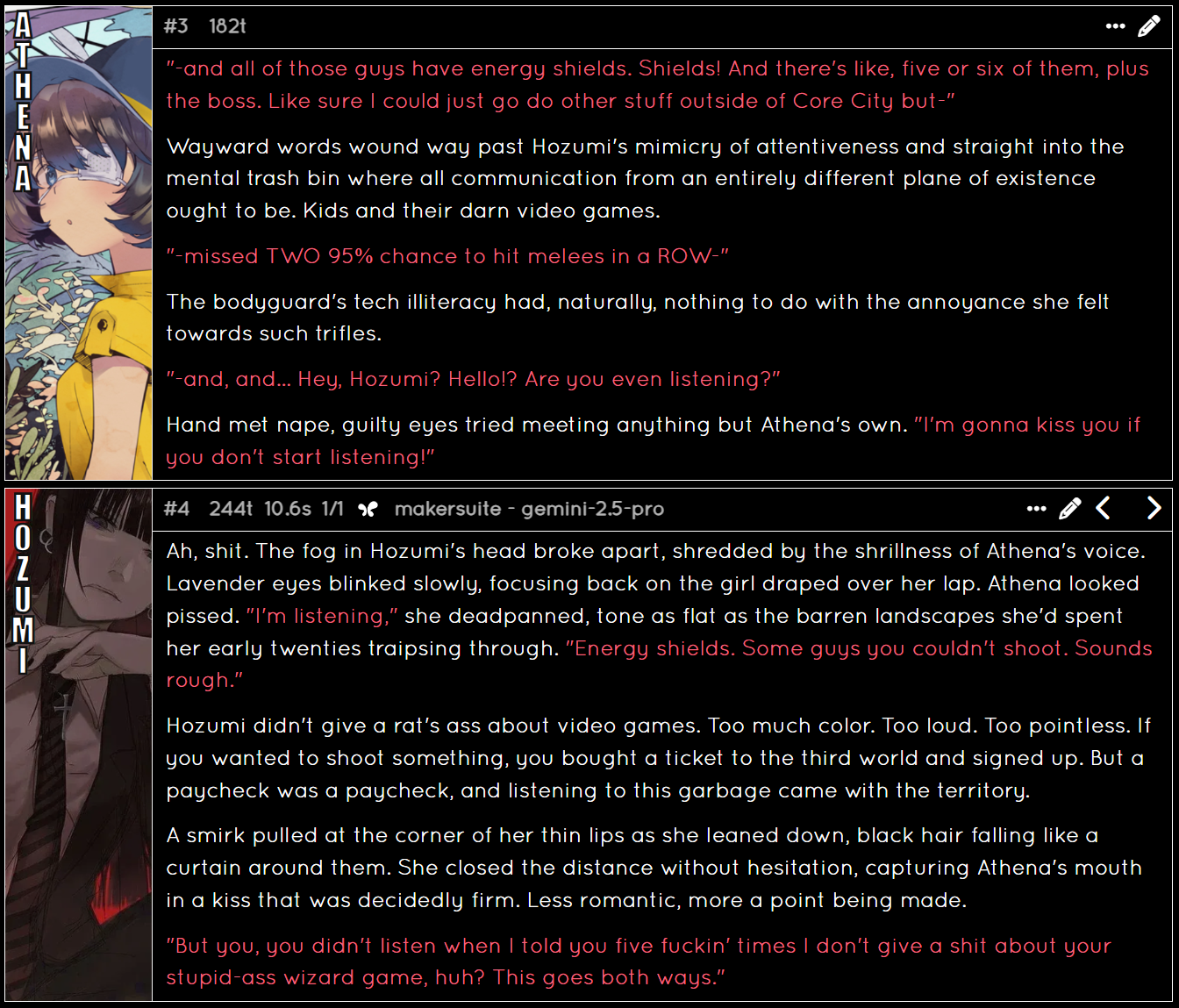

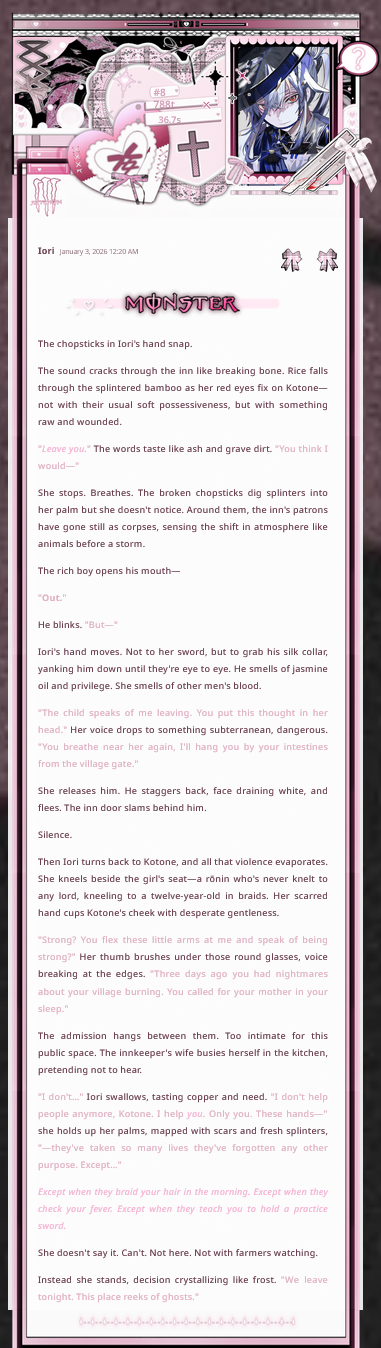

An LLM-driven VN may look and function like this.

If you don't mind horses, /chag/ has created an entire VN frontend free for public use here.

On the topic of things that aren't cards, there is also this repository (Archive) for prompts/injections that can put a fun spin on your current story.

Community survey

So as not to share only my own opinions in this text, I decided to hold a community survey, contacting a large group of card creators and other contributors to my community. 21 people responded, and below are the results. But first I want to clarify that this is only a very small and specific sample of the hobby's community. No "casual" chatbot user was consulted, nor was the survey open to the public. This was done for convenience, sadly. Basically, these people have a stake in the hobby, and are therefore more likely to give honest and insightful responses. I'm sure that there are non-creators with lots to say, but I am also sure that if you open up something like this to the public, you're going to get a bunch of people who will treat it as a joke.

Anyway, here are the questions!

When were you introduced to chatbots, and when did you start using them?

This question was kind of pointless, in a survivorship bias kind of way. Everyone who responded to the survey is either active in the community, or has for some reason kept checking their email (for obvious reasons, most people aren't using their personal emails for contact in this hobby).

As you can see, a majority of those who responded started in 2022-2023. There could be a variety of reasons for this, such as AI RP being something new and exciting back then, but this will be discussed more in the sections about AI downsides and the future of the hobby. Same thing for the small number of people who started in recent years.

A lot of respondents were first introduced to chatbots through AI Dungeon (AID), but didn't start using chatbots until later years.

One respondent had experience with chatbots for most of their life, mentioning things such as ELIZA and games with text parsing (similar to Façade mentioned before) like Galatea and Starship Titanic. (Not a sponsor)

What made you interested in chatbots?

This one had a variety of responses, but I can divide them up into a few categories. With over half of the responses mentioning some form of this, the largest was interactive storytelling and freedom. The idea that you can create stories on the fly with whatever you'd like, and however you like, really resonates with people. Other categories were things such as loneliness, the convenience of roleplay without having to deal with another person, and naturally, pornography.

Do you use LLMs for something other than standard RP/chatbotting experiences?

Not much to say about this question, I was just interested in LLM usage beyond chatbots. By assistant tasks, I mean things such as fact checking/looking up info, reviewing text and more. Many seem to be wary of the dangers of relying on LLMs, and don't use them for anything vital.

Do you think your chatbot usage has changed since you started?

A poorly worded question. The most frequent response is that they RP less, which isn't unexpected, especially for those who have been around for long. Same thing for answers such as getting better at prompting, or becoming less emotionally invested.

The Honeymoon Phase & Some Less Fun Stuff

This is something that's well-known by most chatbot users, and people who experienced it tend to describe it as magical. It's like a whole new world opens up to you, where you can talk to the computer, and it acts like a real person! Many describe it as giving you the same feeling of connection as you would with a real person.

On a less fun note, this honeymoon phase is also something that can separate the sane from those less sane. People who keep thinking their LLM represents a real person long after having started to use it unfortunately exist, and these people may use the LLM's willingness to please the user to affirm certain beliefs they have.

There are those who use AI to delude themselves into thinking LLMs will solve everything in the world, which can be pretty comedic and a nuisance at worst, but then there are also those that use LLMs to delude themselves into hurting themselves or others.

In the end, the LLM is as much at fault for perpetuating harm as violent video games, guns and satanic books are. AI RP is just one of those antisocial hobbies that will naturally be blamed, even though the problem can be traced to these maladjusted people abandoning society for hobbies where they can be accepted.

What would you say is the best thing about chatbots?

Coincidentally, the thing the vast majority of responses say is the best thing about chatbots is what I began with: freedom! I don't think I need to reiterate it once more, people really enjoy the freedom and potential to express creativity that LLMs offer. Along with that, most responses were just a rehash of what made people interested in chatbots in the first place.

One person focused on gamification and mechanics, and only two people responded with writing cards. For the record, all but a few of those contacted for this survey have made or are actively making cards currently. Perhaps some lumped in creation under the umbrella of freedom and creativity.

And the worst thing about chatbots?

Now this is one I can go into detail on. The top response, with almost half of responses mentioning it, was, unsurprisingly, "slop". Called LLM-isms by some, these are phrases, descriptions or sentence structures that LLMs use over and over. If you're using chatbots frequently, this can become very grating. So much so that some people use specific instructions in their presets to try and reduce them, or use regex scripts to outright hide or remove them.

Below that are corporations focusing on making models that are good at coding and assistant tasks, and nothing else. This has been a trend for years now, and seems to show no sign of stopping. Going hand-in-hand with that are also memory issues and writing degradation over longer RPs. Writing degradation can be quite bad, as LLMs have a way of getting fixated on certain phrases or descriptions. Or even objects, deciding to constantly mention an unrelated, irrelevant one just because it did that in the previous reply, and the one before that, and so on...

People also bring up the price: to many, high-end models are priced much higher than they're actually worth, especially if you're going to use them for chatbots.

Another complaint is that LLMs are bad at reading between the lines, and that they sometimes don't pick up things that a human RP partner would. On the topic of RP, there was also the worry of AI being too convenient, and that it's eclipsing shared experiences through RP simply by being available all the time.

What do you think drives people to use chatbots?

The replies to this one were funny, because I could easily categorize all responses into mentions of three different reasons, those you see above. And I think they're right, those feel like they're the big three of why someone would be getting into chatbots. Loneliness leans more into the social aspect, erotica into pleasure-seeking, and freedom into the creative aspects. Of course, they all overlap a bit, but I think that speaks to the flexibility of the hobby. Some people get insanely creative and intricate with their smut, while others see a card of the most whoreacious woman in the world and go "I can fix her". Once again, it's all about freedom and what people do with that freedom.

Where do you think the hobby is headed?

People don't seem to be very optimistic. Equal numbers of people expressed that models are getting worse and that, in order for the hobby to get any better, companies will need to start releasing models that focus on creative writing.

Some expressed hope, and some suggested that in the future, LLMs will be integrated with other mediums.

And now for our one and only quote on this whole page to round things out:

"We're not in the dark ages until targeted ads appear during aftercare" - Chefseru